Looking beyond basic cloud economics: Part Four

The case for standardization

By James Anthony, CTO at Inoapps

We finish this blog series with possibly the simplest benefit of moving to cloud infrastructure, but one that often results in the most heated debate… the business gains that can be realized from standardization.

A few years back, I spent a lot of my time helping customers migrate to Oracle Engineered Systems platforms, mostly Oracle Exadata. In addition to the secret sauce performance features, one of the benefits we espoused was that of standardization from the perspective of supportability. Each Exadata of a given model number left the factory with the same hardware components (processors, hard drives, network and InfiniBand/RoCE cards, switches and so on), eliminating potential conflicts between operating system and hardware (which by this point in time had admittedly become exceedingly rare). Beyond this, each Exadata also ran the same operating system, identical in release, supplied RPMs and configuration.

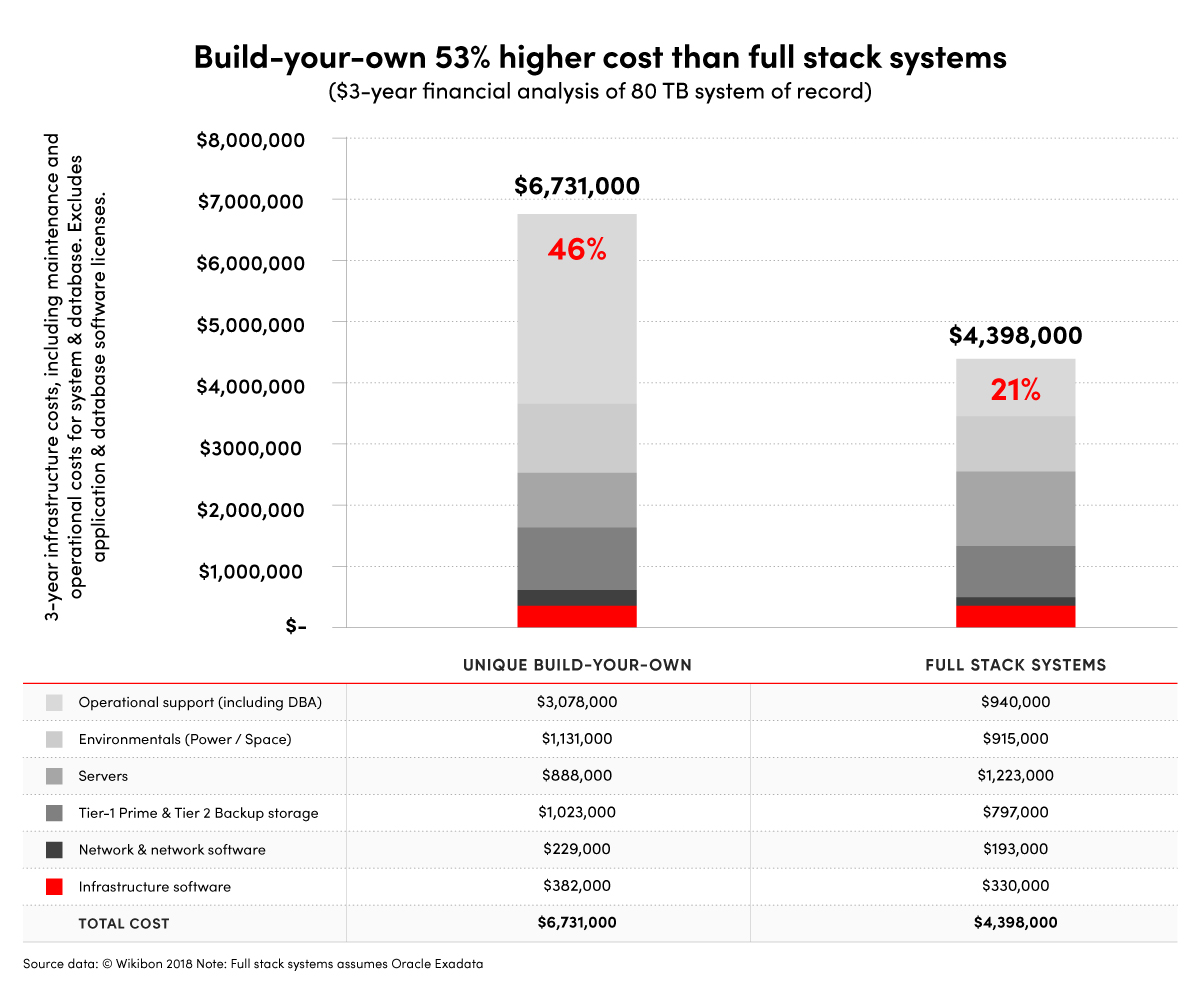

While some hardware vendors played the card of ‘you can as easily build it yourself’ (which only rang true for the physical hardware and not the Exadata software) and Oracle often overplayed the labor savings to be had from installing and cabling hardware, you can’t argue with the fact that something that came preconfigured with a defined, well-known set of hardware and software components provided a lower risk profile than something pulled together bespoke. Importantly, this freed up resources to focus on the deliverable and not the inputs.

Any reduction in the operator input required represents a clear cost reduction, both in terms of the project itself and the opportunity cost of diverting a resource from higher-value work.

With standardization, we have safety in numbers. The chances of us finding an issue that doesn’t exist elsewhere and hasn’t already been solved are significantly lower. Equally, when we do find a problem, it’s much more in Oracle’s interest to resolve it, as we aren’t a niche case. Solving it for us solves it for every other customer too.

This is our second operational cost saving, but one that is considerably harder to quantify: the reduction in the volume of issues encountered and the reduction in time and effort associated with resolving those issues.

Common objections to standardization

So why the contention? Put bluntly, many clients (or more typically people within their IT departments) believe that their system is unique and wouldn’t work with a standardized footprint. Now, firstly let me be clear. I’m in no way saying that the operations each business performs with its database aren’t unique. They may very well be. What I do wholeheartedly contend is that the underlying platform used to support these operations does not need to be unique, and that the extra ‘tweaks’ implemented are still fine within a standardized platform. Let’s look at a few cases:

- “We install something special (such as security scanning software) on our servers.” Fine! There’s nothing to stop you doing that. It may well be that your cloud provider has something that does the same thing, potentially for free, but there’s nothing to stop you from implementing your own preferred solution.

- “We run the application on the same tier as the database.” Well, here we probably do open a can of worms… with the first question being why? If it’s about latency between the database and the application, are you really looking at what modern networking has to offer in terms of both bandwidth and latency? If it’s to reduce the number of servers required, then virtualization solves that issue and we gain the security benefits of segregation.

- “We tweak some very specific parameters for performance.” Here I’d argue that if you’re looking to tweak OS settings for performance… well a) we can still do that, but b) how much performance gain do we really get from that? And how much of that is offset by a move to a newer generation of CPU, memory, NVMe storage and networking? If we’re looking to bypass a bottleneck, is that bottleneck actually still there?

The other benefits of standardization are just as relevant to other industries as they are in IT, and largely focus on knowledge and the retention of knowledge:

- New administrators coming in will instantly ‘know’ the platform with a far smaller learning curve and be able to add value immediately.

- The dependency on that one person who helped build the system and always needs to get involved is removed. Even where some small element of re-architecting is needed, it’s worth it. These days, with the employment market more fluid than ever, this is invaluable to business continuity.

- Consistent quality of deployment, which brings with it benefits around security/compliance and reduced effort. When my job was to deploy systems for customers, a constant ask was for them to be ‘built to best practice’ and often explicitly to Oracle best practice. By using the vendor’s build, we are implicitly building to their best practice, which makes it our minimum quality baseline as we roll out across the organization.
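To make the idea of a minimum quality baseline a little more concrete, here is a minimal sketch in Python of how you might compare a server against a standard build and report only the differences. Everything in it is an assumption for illustration: the `HostProfile` class, the function names, and the package names, versions and settings are hypothetical and do not come from any Oracle or cloud provider tooling.

```python
from dataclasses import dataclass, field


@dataclass
class HostProfile:
    """Hypothetical snapshot of one server: package versions and OS settings."""
    packages: dict[str, str] = field(default_factory=dict)
    settings: dict[str, str] = field(default_factory=dict)


def find_drift(baseline: dict[str, str], actual: dict[str, str]) -> dict[str, tuple]:
    """Return {key: (expected, found)} for every key whose value differs.

    None on either side means the key is absent on that side.
    """
    drift = {}
    for key in sorted(baseline.keys() | actual.keys()):
        expected = baseline.get(key)
        found = actual.get(key)
        if expected != found:
            drift[key] = (expected, found)
    return drift


def compare_to_baseline(baseline: HostProfile, host: HostProfile) -> dict[str, dict]:
    """Compare a host against the standard build, grouped by category."""
    return {
        "packages": find_drift(baseline.packages, host.packages),
        "settings": find_drift(baseline.settings, host.settings),
    }


if __name__ == "__main__":
    # Illustrative values only; not taken from any real build.
    standard_build = HostProfile(
        packages={"openssl": "3.0.7", "chrony": "4.3"},
        settings={"vm.swappiness": "10"},
    )
    our_server = HostProfile(
        packages={"openssl": "3.0.7", "chrony": "4.1", "custom-scanner": "2.0"},
        settings={"vm.swappiness": "60"},
    )
    for category, drift in compare_to_baseline(standard_build, our_server).items():
        for name, (expected, found) in drift.items():
            print(f"{category}: {name} expected={expected} found={found}")
```

The point of the sketch is that on a standardized platform this list stays short. The security scanner and the tuned parameter from the objections above show up as a handful of known, documented deviations rather than an entire bespoke build that only one person understands.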

Over the last few months, I’ve talked about why I think that the benefits of the journey to cloud infrastructure are about more than the economics of one data center over another, and should focus on the technological and business values it can provide. Whether this is streamlining operations, reducing costs, or factoring out risk, I would contend that all of these allow us to align more tightly with the paradigm of IT empowering the business and focusing on outcomes.

There is always comfort in the familiar, with the tweaks and customizations we’ve become so used to over the years. But as we face the latest wave of IT transformation and the complexities that come with it, it’s increasingly apparent that we’re better served embracing the firm foundations of standardization rather than standing like King Canute hoping to push back the tide.